Custom Model Training for DeepLens using Caltech-256 Dataset

1. Background

AWS DeepLens is a stand-alone video camera with on-board compute for running deep learning models locally.

2. Image Classification

There are several pre-trained models for DeepLens. The goal of this project is to provide a newly trained model for use with DeepLens.

The training data is Caltech-256, described in the technical report at https://authors.library.caltech.edu/7694/1/CNS-TR-2007-001.pdf. Its abstract begins:

"We introduce a challenging set of 256 object categories containing a total of 30607 images."

3. Workflow

- create notebook

- define containers

- get Caltech-256

- define the network for training
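
The "get Caltech-256" step usually ends with a labelled train/validation split. The following is a minimal sketch, assuming the archive has been unpacked into the dataset's usual layout of one directory per category, named like `001.ak47`. The function names, the default of 60 training images per class, and the fixed seed are illustrative choices that this note does not specify.

```python
import random
from pathlib import Path


def parse_category(dirname):
    """Split a category directory name such as '001.ak47' into a
    zero-based label index and the category name."""
    index, name = dirname.split(".", 1)
    return int(index) - 1, name


def split_dataset(root, train_per_class=60, seed=0):
    """Return (train, val) lists of (label, path) pairs.

    train_per_class and seed are assumptions made for this sketch.
    """
    rng = random.Random(seed)
    train, val = [], []
    for category_dir in sorted(Path(root).iterdir()):
        if not category_dir.is_dir():
            continue
        label, _ = parse_category(category_dir.name)
        images = sorted(category_dir.glob("*.jpg"))
        rng.shuffle(images)  # deterministic because rng is seeded
        train += [(label, str(p)) for p in images[:train_per_class]]
        val += [(label, str(p)) for p in images[train_per_class:]]
    return train, val
```

Calling `split_dataset("256_ObjectCategories")` would return two lists that can then be written out in whatever format the training container expects.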

3.1. Network

{
  "type": "ResNet",
  "hyperparameters": {}
}
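
The `hyperparameters` object above is left empty. As a sketch of how it might be filled in and serialized, the snippet below builds the same structure in Python. The keys borrow names used by SageMaker's built-in image classification algorithm (`num_layers`, `num_classes`, `image_shape`, `epochs`, `learning_rate`, `mini_batch_size`). Every value shown is a placeholder assumption, not a setting taken from this project.

```python
import json


def build_network_config(num_classes=256, num_layers=18, epochs=10,
                         learning_rate=0.01, mini_batch_size=32):
    """Build the network definition as a plain dict.

    All defaults are illustrative placeholders. num_classes=256 follows
    the category count quoted above; use 257 if the dataset's extra
    clutter category is kept.
    """
    if num_classes < 2:
        raise ValueError("need at least two classes")
    return {
        "type": "ResNet",
        "hyperparameters": {
            "num_layers": num_layers,
            "num_classes": num_classes,
            "image_shape": "3,224,224",  # channels,height,width
            "epochs": epochs,
            "learning_rate": learning_rate,
            "mini_batch_size": mini_batch_size,
        },
    }


if __name__ == "__main__":
    print(json.dumps(build_network_config(), indent=2))
```

Keeping the definition in a function makes it easy to vary one setting per training run while the JSON shape stays fixed.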

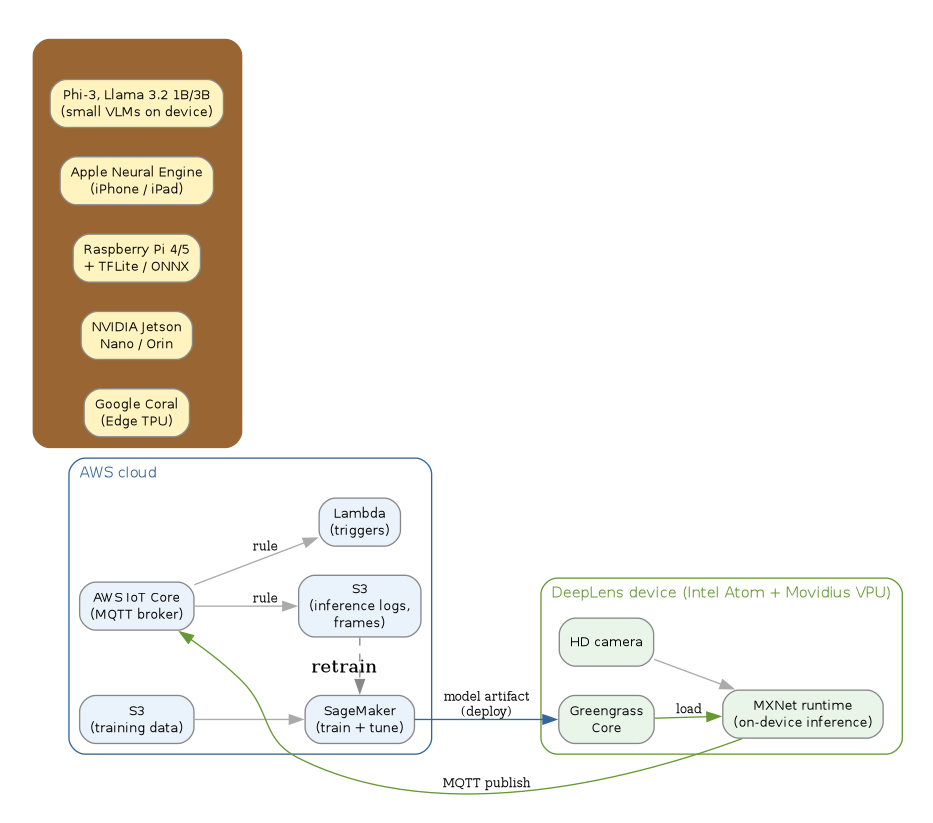

4. Edge ML architecture

// AWS DeepLens (2017-2020) reference architecture: train in SageMaker,
// package with Greengrass, serve on the device, stream inference back
// to AWS IoT for retraining and Lambda triggers.
digraph edge_ml_deeplens {
  rankdir=LR;
  graph [bgcolor="white", fontname="Helvetica", fontsize=11, pad="0.3", nodesep="0.3", ranksep="0.45"];
  node [shape=box, style="rounded,filled", fontname="Helvetica", fontsize=10, fillcolor="#dbeafe", color="#888"];
  edge [color="#aaa"];

  // Cloud side
  subgraph cluster_cloud {
    label="AWS cloud"; labeljust="l";
    color="#1d4ed8"; fontcolor="#1d4ed8"; style="rounded";
    sm [label="SageMaker\n(train + tune)", fillcolor="#eaf2fb"];
    s3in [label="S3\n(training data)", fillcolor="#eaf2fb"];
    s3out [label="S3\n(inference logs,\nframes)", fillcolor="#eaf2fb"];
    iot [label="AWS IoT Core\n(MQTT broker)", fillcolor="#eaf2fb"];
    lam [label="Lambda\n(triggers)", fillcolor="#eaf2fb"];
    s3in -> sm;
  }

  // Edge side
  subgraph cluster_edge {
    label="DeepLens device (Intel Atom + Gen9 graphics)"; labeljust="l";
    color="#15803d"; fontcolor="#15803d"; style="rounded";
    gg [label="Greengrass\nCore", fillcolor="#eaf5ea"];
    cam [label="HD camera", fillcolor="#eaf5ea"];
    infer [label="MXNet runtime\n(on-device inference)", fillcolor="#eaf5ea"];
    cam -> infer;
  }

  // Model packaging + deploy
  sm -> gg [label="model artifact\n(deploy)", fontsize=9, color="#1d4ed8"];
  gg -> infer [label="load", fontsize=9, color="#15803d"];

  // Inference results back to cloud
  infer -> iot [label="MQTT publish", fontsize=9, color="#15803d"];
  iot -> s3out [label="rule", fontsize=9];
  iot -> lam [label="rule", fontsize=9];

  // Retraining feedback loop
  s3out -> sm [label="retrain", style=dashed, color="#888", constraint=false];

  // Successors (the actual 2026 winners)
  subgraph cluster_post {
    label="What replaced it (2024+)"; labeljust="l";
    color="#a16207"; fontcolor="#a16207"; style="rounded,filled"; bgcolor="#fff7da";
    coral [label="Google Coral\n(Edge TPU)", fillcolor="#fff3c0"];
    jetson [label="NVIDIA Jetson\nNano / Orin", fillcolor="#fff3c0"];
    pi_tfl [label="Raspberry Pi 4/5\n+ TFLite / ONNX", fillcolor="#fff3c0"];
    ane [label="Apple Neural Engine\n(iPhone / iPad)", fillcolor="#fff3c0"];
    smolvlm [label="Phi-3, Llama 3.2 1B/3B\n(small LLMs on device)", fillcolor="#fff3c0"];
  }
}
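
The "MQTT publish" edge in the diagram carries inference results from the device to AWS IoT Core. Below is a minimal sketch of what that message body could look like, assuming the model emits one probability per class. The function name, the field names, and the threshold are hypothetical. The publish call itself, which on the device would go through the Greengrass SDK, is deliberately left out so that the snippet runs anywhere.

```python
import json


def top_k_payload(probabilities, labels, k=3, threshold=0.0):
    """Serialize the k most likely classes as a JSON string.

    Entries whose probability falls below threshold are dropped, so the
    result may contain fewer than k predictions.
    """
    if len(probabilities) != len(labels):
        raise ValueError("probabilities and labels must have equal length")
    # Sort (label, probability) pairs from most to least likely.
    ranked = sorted(zip(labels, probabilities),
                    key=lambda pair: pair[1], reverse=True)
    top = [
        {"label": label, "prob": round(float(prob), 4)}
        for label, prob in ranked[:k]
        if prob >= threshold
    ]
    return json.dumps({"predictions": top})
```

Sending only the top few classes keeps each message small, which matters when a camera publishes one result per frame.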

5. Related notes

- 2019 machine learning pipelines — DeepLens was the cloud-trained / edge-deployed instantiation of the pipeline this note documented.

- Kubeflow — server-side analogue; KubeRay + KServe took the same role on the cloud-orchestration side.

- Fine-tuning ML models — small-model fine-tuning made on-device inference viable on commodity hardware after DeepLens.

- scikit-learn — sibling 2019 note on the tabular side of the same era.

6. Postscript (2026)

AWS discontinued DeepLens, with service for the device ending on 31 January 2024. SageMaker Edge Manager, the 2020 successor pitched at the same edge-ML use case, was also discontinued on 26 April 2024. The actual winners in edge ML were not all-in-one branded devices but components: Google's Coral Edge TPU (USB Accelerator and Dev Board), NVIDIA's Jetson Nano / Orin family, Raspberry Pi 4/5 paired with TensorFlow Lite or ONNX Runtime, and Apple's Neural Engine for on-device ML on iOS. In 2024-2025 the meaning of "edge ML" shifted away from CV-specific classifiers toward small language models running on commodity hardware: Microsoft's Phi-3 (April 2024), Meta's Llama 3.2 1B and 3B (September 2024), and Google's Gemma 2B all targeted on-device inference. The hardware story converged on whatever device the user already owned.