Machine Learning Workflow and Cloud Platforms (2019)

Table of Contents

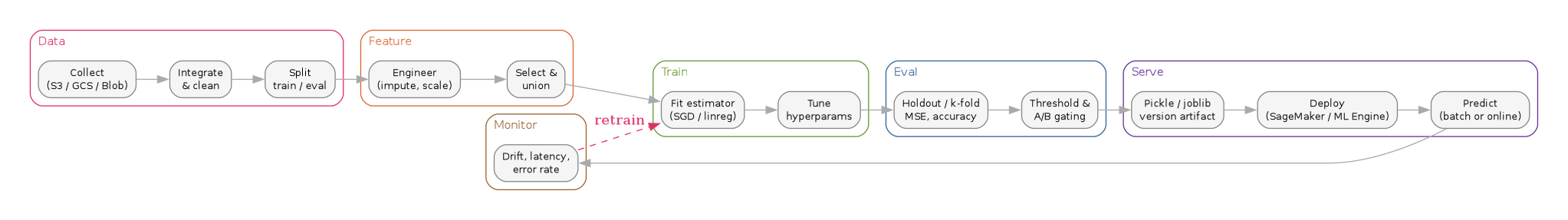

1. Workflow

1.1. scikit-learn

- Load

- Data analysis (numeric vs. categorical)

- Preprocess Pipeline (features union, impute, fill, scale)

- Features, Target

- Estimator (fit)

- Pickle

- Version and Deploy

- Input

- Preprocess Pipeline

- Predict
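The steps above can be sketched end to end as follows. This is a minimal illustration, not the notes' actual project code: the column names, the toy data, and the choice of `LinearRegression` are assumptions, and `ColumnTransformer` stands in for the "features union" step.

```python
import pickle

import numpy as np
import pandas as pd
from sklearn.compose import ColumnTransformer
from sklearn.impute import SimpleImputer
from sklearn.linear_model import LinearRegression
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import OneHotEncoder, StandardScaler

# Load: a tiny hypothetical dataset with missing numeric values.
data = pd.DataFrame({
    "rooms": [2.0, 3.0, np.nan, 4.0, 3.0, 5.0],
    "area": [50.0, 70.0, 65.0, np.nan, 80.0, 120.0],
    "city": ["a", "b", "a", "b", "b", "a"],
    "price": [100.0, 150.0, 130.0, 180.0, 160.0, 260.0],
})

# Data analysis: separate numeric from categorical columns.
numeric = ["rooms", "area"]
categorical = ["city"]

# Preprocess pipeline: impute and scale numeric columns, one-hot encode
# categorical ones, then combine the results into one feature matrix.
preprocess = ColumnTransformer([
    ("num", Pipeline([
        ("impute", SimpleImputer(strategy="median")),
        ("scale", StandardScaler()),
    ]), numeric),
    ("cat", OneHotEncoder(handle_unknown="ignore"), categorical),
])

# Features, target, and estimator (fit).
X = data[numeric + categorical]
y = data["price"]
model = Pipeline([("preprocess", preprocess), ("estimator", LinearRegression())])
model.fit(X, y)

# Pickle: serialize the whole pipeline so preprocessing ships with the model.
# In practice the bytes would be written to a versioned file such as model.pkl.
blob = pickle.dumps(model)

# Input -> preprocess pipeline -> predict, using the restored artifact.
restored = pickle.loads(blob)
new_rows = pd.DataFrame({"rooms": [3.0], "area": [np.nan], "city": ["c"]})
prediction = restored.predict(new_rows)
```

Pickling the full `Pipeline`, rather than the estimator alone, means the same imputation and scaling run at prediction time as at training time. That is why "Preprocess Pipeline" appears twice in the list.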

1.2. AWS (Machine Learning)

https://docs.aws.amazon.com/machine-learning/latest/dg/the-machine-learning-process.html

- Analyze your data

- Split data into training and evaluation datasources

- Shuffle your training data

- Process features

- Train the model

- Select model parameters

- Evaluate the model performance

- Feature selection

- Set a score threshold for prediction accuracy

- Use the model

- Prediction input to S3

- Boto3 batch prediction

- Waiter poll

- Prediction response (S3)

- Clean data source, model, and batch prediction resources
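The "Use the model" steps can be sketched with the boto3 `machinelearning` client. This is a hedged outline rather than a tested deployment: it assumes the prediction input has already been uploaded to S3 and registered as a datasource, every ID and the output URI are hypothetical, and the client is passed in so the functions can be exercised without AWS credentials.

```python
def run_batch_prediction(client, model_id, datasource_id, output_uri, batch_id):
    """Create an Amazon ML batch prediction and wait until it is available.

    `client` is expected to behave like boto3.client("machinelearning").
    """
    # Boto3 batch prediction: results are written under output_uri in S3.
    client.create_batch_prediction(
        BatchPredictionId=batch_id,
        BatchPredictionName=batch_id,
        MLModelId=model_id,
        BatchPredictionDataSourceId=datasource_id,
        OutputUri=output_uri,
    )

    # Waiter poll: repeatedly describes batch predictions until this one finishes.
    waiter = client.get_waiter("batch_prediction_available")
    waiter.wait(FilterVariable="Name", EQ=batch_id)

    # Prediction response: the description includes the S3 output location.
    return client.get_batch_prediction(BatchPredictionId=batch_id)


def clean_up(client, batch_id, model_id, datasource_id):
    """Delete the batch prediction, model, and datasource resources."""
    client.delete_batch_prediction(BatchPredictionId=batch_id)
    client.delete_ml_model(MLModelId=model_id)
    client.delete_data_source(DataSourceId=datasource_id)
```

With real credentials, the client would be created as `boto3.client("machinelearning")` and passed as the first argument. Deleting resources in the order shown removes the prediction before the model and datasource it depends on.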

1.3. AWS (SageMaker)

https://docs.aws.amazon.com/sagemaker/latest/dg/how-it-works-notebooks-instances.html

- Explore and Preprocess Data

- Model Training

- Model Deployment

- Validating Models

- Programming Model

1.4. Google (ML Engine)

https://github.com/GoogleCloudPlatform/cloudml-samples

- Model

- Version

- Framework (e.g., scikit-learn)

- Notebook: joblib: model.pkl

1.5. Azure (Machine Learning service)

https://github.com/Azure/MachineLearningNotebooks

- Experiments

- Pipelines

- Compute

- Models

- Images

- Deployments

- Activities

1.5.1. Models

- Azure notebooks

- Load data

- Cleanse data

- Convert types and filter

- Split and rename columns

- Transform data

- Clean up resources

- Train the automatic regression model

- Test the best model accuracy

1.6. IBM

1.7. Oracle

2. Project Pipeline

| Task | Features v2 | Target v2 | Clustering | Model 2 | Model 3 |
| --- | --- | --- | --- | --- | --- |
| Data Collection | | | | | |
| Data Integration | | | | | |
| Data Cleaning | | | | | |
| Analysis Tools | | | | | |
| Data Analysis | | | | | |
| Feature Engineering | | | | | |
| Pipeline Management | | | | | |
| Model Training | | | | | |
| Tuning | | | | | |
| Model Evaluation | | | | | |
| Configuration | | | | | |
| Deployment | | | | | |
| A/B Testing | | | | | |
| Resource Management | | | | | |
| Feature Extraction | | | | | |
| Target Management | | | | | |
| Model Deprecation | | | | | |

    // 2019 ML workflow: five-stage pipeline with monitoring → retraining feedback
    digraph ml_pipeline_2019 {
      rankdir=LR;
      graph [bgcolor="white", fontname="Helvetica", fontsize=11, pad="0.3", nodesep="0.3", ranksep="0.45"];
      node [shape=box, style="rounded,filled", fontname="Helvetica", fontsize=10, fillcolor="#dbeafe", color="#888", fontcolor="#555"];
      edge [color="#888", fontcolor="#555"];

      // Stage 1: Data
      subgraph cluster_data {
        label="Data"; labeljust="l"; color="#b91c1c"; fontcolor="#b91c1c"; style="rounded";
        d1 [label="Collect\n(S3 / GCS / Blob)"];
        d2 [label="Integrate\n& clean"];
        d3 [label="Split\ntrain / eval"];
      }

      // Stage 2: Feature
      subgraph cluster_feat {
        label="Feature"; labeljust="l"; color="#b45309"; fontcolor="#b45309"; style="rounded";
        f1 [label="Engineer\n(impute, scale)"];
        f2 [label="Select &\nunion"];
      }

      // Stage 3: Train
      subgraph cluster_train {
        label="Train"; labeljust="l"; color="#15803d"; fontcolor="#15803d"; style="rounded";
        t1 [label="Fit estimator\n(SGD / linreg)"];
        t2 [label="Tune\nhyperparams"];
      }

      // Stage 4: Eval
      subgraph cluster_eval {
        label="Eval"; labeljust="l"; color="#1d4ed8"; fontcolor="#1d4ed8"; style="rounded";
        e1 [label="Holdout / k-fold\nMSE, accuracy"];
        e2 [label="Threshold &\nA/B gating"];
      }

      // Stage 5: Serve
      subgraph cluster_serve {
        label="Serve"; labeljust="l"; color="#6b21a8"; fontcolor="#6b21a8"; style="rounded";
        s1 [label="Pickle / joblib\nversion artifact"];
        s2 [label="Deploy\n(SageMaker / ML Engine)"];
        s3 [label="Predict\n(batch or online)"];
      }

      // Monitoring (drives feedback)
      subgraph cluster_mon {
        label="Monitor"; labeljust="l"; color="#a16207"; fontcolor="#a16207"; style="rounded";
        m1 [label="Drift, latency,\nerror rate"];
      }

      // Forward flow
      d1 -> d2 -> d3 -> f1 -> f2 -> t1 -> t2 -> e1 -> e2 -> s1 -> s2 -> s3 -> m1;

      // Feedback: monitoring triggers retraining
      m1 -> t1 [label="retrain", style=dashed, color="#b91c1c", fontcolor="#b91c1c"];
    }

4. Versioning

5. Validation

- MSE

- Training Error

- Resubstitution

- Hold-out

- K-fold cross-validation

- LOOCV

- Random subsampling

- Bootstrapping

- Over-Fit

- Confidence
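Several of the methods listed above differ only in how they choose train and test indices. The sketch below, in plain Python, illustrates MSE, resubstitution error, k-fold splitting (with LOOCV as the special case k = n), and bootstrap sampling. The "predict the training mean" model and the sample values are assumptions chosen to keep the example small.

```python
import random


def mse(y_true, y_pred):
    """Mean squared error between two equal-length sequences."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


def k_fold_indices(n, k, seed=0):
    """Yield (train, test) index lists for k folds; k == n gives LOOCV."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    folds = [idx[i::k] for i in range(k)]
    for i, test in enumerate(folds):
        train = [j for f, fold in enumerate(folds) if f != i for j in fold]
        yield train, test


def bootstrap_indices(n, seed=0):
    """Sample n training indices with replacement; the rest are out-of-bag."""
    rng = random.Random(seed)
    train = [rng.randrange(n) for _ in range(n)]
    chosen = set(train)
    test = [i for i in range(n) if i not in chosen]
    return train, test


def cv_mse(y, k):
    """Cross-validated MSE of a model that predicts the training mean."""
    scores = []
    for train, test in k_fold_indices(len(y), k):
        mean = sum(y[i] for i in train) / len(train)
        scores.append(mse([y[i] for i in test], [mean] * len(test)))
    return sum(scores) / len(scores)


y = [3.0, 5.0, 4.0, 6.0, 2.0, 7.0, 5.0, 4.0]

# Resubstitution (training) error: evaluate on the data used to fit.
overall_mean = sum(y) / len(y)
resubstitution = mse(y, [overall_mean] * len(y))

four_fold = cv_mse(y, k=4)
loocv = cv_mse(y, k=len(y))
```

For this mean predictor the LOOCV error is always at least as large as the resubstitution error, which is the optimism that the "Over-Fit" bullet refers to: error measured on the training data understates error on unseen data.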

8. Algorithms

8.1. AWS Machine Learning

For our purposes, we simply use linear regression (squared loss function, optimized with SGD).
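As an illustration of what "squared loss and SGD" means, here is a minimal single-feature sketch in plain Python. It is not the AWS implementation: the learning rate, epoch count, and toy data are assumptions. Each step uses the gradient of the per-example loss ½(wx + b − y)².

```python
import random


def sgd_linear_regression(xs, ys, lr=0.05, epochs=500, seed=0):
    """Fit y ≈ w * x + b by stochastic gradient descent on squared loss."""
    rng = random.Random(seed)
    w, b = 0.0, 0.0
    order = list(range(len(xs)))
    for _ in range(epochs):
        rng.shuffle(order)  # visit examples in a fresh random order each epoch
        for i in order:
            err = (w * xs[i] + b) - ys[i]  # prediction error on one example
            w -= lr * err * xs[i]          # d/dw of 0.5 * err**2
            b -= lr * err                  # d/db of 0.5 * err**2
    return w, b


# Noise-free toy data generated from y = 2x + 1.
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]
w, b = sgd_linear_regression(xs, ys)
```

On this noise-free data the estimates approach w = 2 and b = 1. With noisy data a constant learning rate leaves the parameters jittering around the optimum, which is why practical SGD implementations usually decay the rate over time.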

9. Models

12. Related notes

- scikit-learn — the estimator/pipeline API underpinning the "Workflow → scikit-learn" stage above

- Machine learning design patterns — patterns (feature store, transform, workflow pipeline) that formalise this 2019 sketch

- Kubeflow — the Deployment section's link, expanded into a notes file on pipeline orchestration

- Model interpretability & fairness in fintech — picks up the Exploration step (What-If Tool) and pushes it into production governance

13. Postscript (2026)

The 2019 cloud-by-cloud comparison reads like a museum tour now: the workflow itself survived, but the stack was rebuilt around it. The "Project Pipeline" table is roughly what MLOps principles had codified by 2021, and what Kubeflow Pipelines and MLflow now provide as off-the-shelf orchestration and experiment tracking. Feature engineering moved out of notebook scripts into managed feature stores, with Feast as the open-source baseline, so the "features union, impute, fill, scale" bullets are now declarative registry entries shared between training and online serving. Data versioning, which in 2019 amounted to blog posts on pickling models, is now handled by DVC (or lakeFS, or Delta Lake), tracking datasets alongside code in git.

The Validation section's MSE / k-fold list still applies to tabular models, but for generative systems the dominant 2026 pattern is LLM-as-judge eval harnesses such as LangSmith or OpenAI Evals, where the "score threshold" is a rubric prompt rather than a number.

The cloud-vendor matrix is also flatter: SageMaker, Vertex AI, and Azure ML have converged on the same shape (managed pipeline, model registry, endpoint), so the interesting choices in 2026 are about feature stores, eval methodology, and agent-tool wiring rather than which cloud's notebook to open.